F.I.R.E. Up Agentic AI Adoption: A Systems Strategy for Lasting Impact

“You don’t rise to the level of your goals, you fall to the level of your systems.” — James Clear

Imagine a workflow where AI generates PRDs, mockups, and code, while humans use their expertise to provide context, architect and orchestrate systems, shape outputs, set the strategy, and make decisions.

I’ve been thinking deeply about how organizations can systemize AI adoption. That thinking led me to develop F.I.R.E.: Foundations, Iteration, Review, Embed.

This framework guides teams from initial experiments to scalable, AI-assisted systems, positioning AI not merely as a one-off efficiency tool, but as a growth driver.

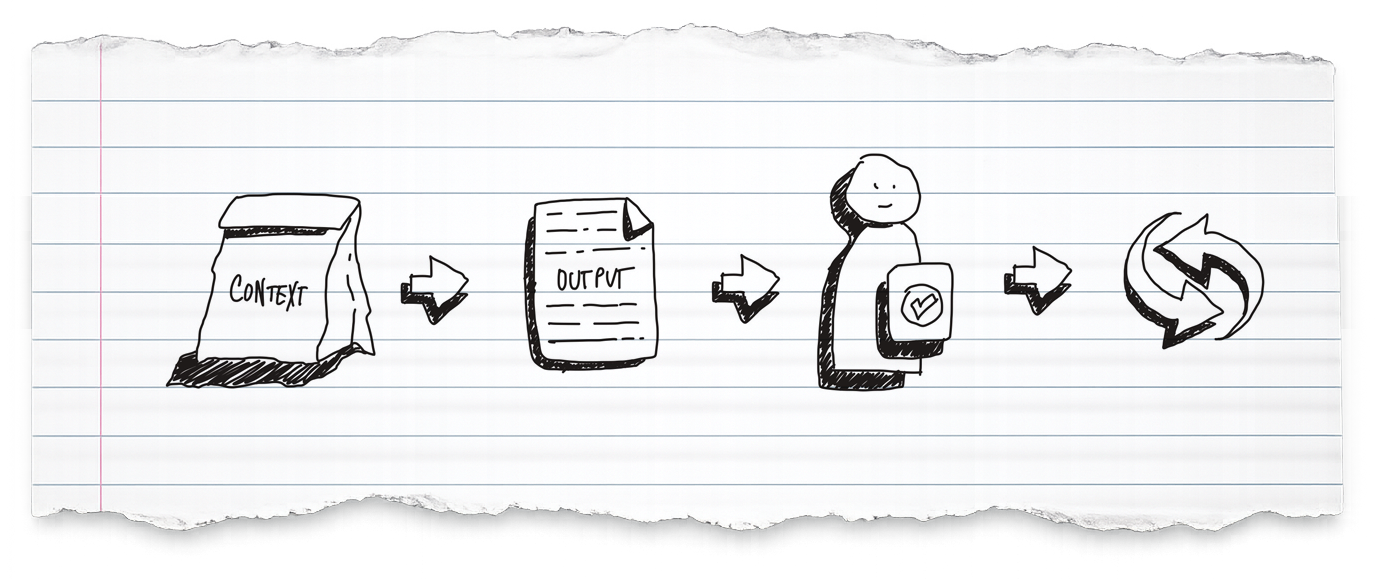

The system consists of:

- Inputs: context packs (static + dynamic)

- Outputs: AI-generated artifacts validated by humans

- Feedback: what worked, what didn’t, and what to change

Sparking Systematic Adoption with F.I.R.E.

F.I.R.E. is a system for building AI into your workflows:

Foundations

Build the systems and context AI depends on. Standardized documentation, templates, and review processes ensure outputs are reliable and oversight is efficient. Without solid foundations, AI produces inconsistent results that require more human effort to correct than they save.

Iteration

Refine AI workflows continuously. Teams experiment, refine prompts and processes, and integrate AI into real work.

Treat your AI workflow like software: version your prompts, note what changed and why, and add constraints when the model drifts. Each cycle teaches you something about how AI performs in your specific context.

Acknowledge the law of diminishing returns and don’t micro-optimize. Once a workflow is reliable enough, move on to the next one. But keep in mind that every workflow is a living document that may need updates as your needs or the model change.
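One way to treat prompts like software is to keep a small change history next to each prompt. The sketch below is a minimal illustration, not a prescribed tool: the names `PromptVersion`, `PromptHistory`, and `revise`, and the sample prompt text, are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    change_note: str  # what changed and why


@dataclass
class PromptHistory:
    name: str
    versions: list[PromptVersion] = field(default_factory=list)

    def revise(self, text: str, change_note: str) -> PromptVersion:
        """Append a new version; a revision without a reason is rejected."""
        if not change_note.strip():
            raise ValueError("every revision needs a note on what changed and why")
        entry = PromptVersion(len(self.versions) + 1, text, change_note)
        self.versions.append(entry)
        return entry

    @property
    def current(self) -> PromptVersion:
        return self.versions[-1]


# Hypothetical example: a constraint is added after the model drifts.
history = PromptHistory("prd_draft")
history.revise("Draft a PRD from the customer signals provided.", "Initial version.")
history.revise(
    "Draft a PRD from the customer signals provided. "
    "Do not propose features outside the stated scope.",
    "Added a scope constraint after drafts drifted into unrelated features.",
)
print(history.current.version, history.current.change_note)
```

Storing the same information in a version-controlled file would work just as well; the point is that each change carries its reason.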

Review

Maintain human-in-the-loop validation for output quality. AI has no stakes; it doesn’t live with the consequences of choosing the wrong problem or making the wrong tradeoff. The organization bears those consequences, which is why humans must remain accountable for direction and decisions, not just final delivery.

Embed

Institutionalize AI practices into organizational systems. What starts as experimentation becomes standard operating procedure, repeatable and sustainable across functions.

- Build a living playbook with documented patterns and prompts.

- Create closed-loop learning by feeding workflow quality back into Foundations and Iteration:

  - Which context was missing that caused poor outputs?

  - Which prompt patterns consistently produced usable results?

  - How much time was spent correcting vs. building on what AI generated?

This is how you build confidence in the system over time.
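The three feedback questions above can be captured as one small record per run and rolled up over time. This is a rough sketch under my own assumptions: the field names, the `summarize` helper, and the sample values are invented for illustration and are not measured results.

```python
from dataclasses import dataclass, field


@dataclass
class RunFeedback:
    workflow: str
    prompt_version: int
    usable: bool  # did the output survive review?
    missing_context: list[str] = field(default_factory=list)
    minutes_correcting: float = 0.0  # time spent fixing the output
    minutes_building: float = 0.0    # time spent building on the output


def summarize(runs: list[RunFeedback]) -> dict:
    """Roll up context gaps, usable rate per prompt version, and correction share."""
    gaps: dict[str, int] = {}
    by_version: dict[int, list[bool]] = {}
    for r in runs:
        for item in r.missing_context:
            gaps[item] = gaps.get(item, 0) + 1
        by_version.setdefault(r.prompt_version, []).append(r.usable)

    correcting = sum(r.minutes_correcting for r in runs)
    building = sum(r.minutes_building for r in runs)
    total = correcting + building
    return {
        "context_gaps": gaps,
        "usable_rate_by_prompt_version": {
            v: sum(flags) / len(flags) for v, flags in by_version.items()
        },
        "correction_share": correcting / total if total else 0.0,
    }


# Illustrative values only.
runs = [
    RunFeedback("prd_draft", 1, False, ["pricing constraints"], 40, 10),
    RunFeedback("prd_draft", 2, True, [], 10, 30),
    RunFeedback("prd_draft", 2, True, ["pricing constraints"], 10, 20),
]
summary = summarize(runs)
print(summary)
```

A recurring entry in `context_gaps` points back to Foundations; a prompt version with a low usable rate points back to Iteration.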

F.I.R.E. isn’t just a checklist. It’s a strategy for designing AI-enabled workflows:

- Foundations set the stage

- Iteration builds capability

- Review ensures quality

- Embed institutionalizes practices

Foundations: The Context Supply Chain

In F.I.R.E., Foundations is the context and structure you feed AI so the output is reliably good.

Think of it as a supply chain problem: can you consistently assemble the right inputs to get the right outputs that deliver good outcomes?

Each AI-assisted workflow requires a Context Pack with two layers. We’ll use Product, Design, and Engineering as an example:

Static context remains consistent across problems:

- agent instructions (prompt)

- business objectives

- design systems

- coding standards

- compliance requirements

Dynamic context changes based on the specific problem:

- market research for new products

- support tickets for improvements

- upstream artifacts like PRDs that flow to downstream specialized agents

For instance, a Product Discovery Agent receives static context (business objectives) plus dynamic context (customer signals). The Design Agent receives static context (design system) plus the PRD. The Coding Agent receives static context (coding standards) plus the design spec and PRD. Outputs from one stage become inputs for the next.
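The hand-off described above can be sketched as a small data structure. This is an illustration under assumptions: `ContextPack` and `run_agent` are hypothetical names, the context values are placeholders, and `run_agent` stands in for a real model call by returning a labeled stub.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPack:
    static: dict[str, str]                                 # consistent across problems
    dynamic: dict[str, str] = field(default_factory=dict)  # specific to this problem

    def names(self) -> list[str]:
        return [*self.static, *self.dynamic]


def run_agent(name: str, pack: ContextPack) -> str:
    """Placeholder for a real model call; returns a stub artifact."""
    return f"[{name} output from: {', '.join(pack.names())}]"


discovery_pack = ContextPack(
    static={"business_objectives": "<objectives doc>"},
    dynamic={"customer_signals": "<support tickets, research>"},
)
prd = run_agent("product_discovery", discovery_pack)

# The PRD produced upstream becomes dynamic context downstream.
design_pack = ContextPack(
    static={"design_system": "<design system doc>"},
    dynamic={"prd": prd},
)
design_spec = run_agent("design", design_pack)

coding_pack = ContextPack(
    static={"coding_standards": "<standards doc>"},
    dynamic={"prd": prd, "design_spec": design_spec},
)
code = run_agent("coding", coding_pack)
print(code)
```

Keeping the two layers separate makes it easy to see which inputs are reused across problems and which must be assembled fresh each time.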

The agent instructions should make the agent a thought partner with well-defined goals that set clear expectations (more details on this in a future post):

- goals: its role and specific functions

- constraints: what it should NOT do

- output consistency: expected artifacts and output templates

- evaluation: how it evaluates its output quality

- thought partnership: encourage the agent to ask its human partner questions and be objective
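As a rough illustration, the five elements above can be kept as structured fields and rendered into a single instruction prompt. The class name, field names, section headings, and sample wording here are all assumptions made for the sketch, not a required format.

```python
from dataclasses import dataclass


@dataclass
class AgentInstructions:
    goals: str
    constraints: list[str]
    output_template: str
    evaluation: list[str]
    thought_partnership: str

    def to_prompt(self) -> str:
        """Render the five elements as labeled sections of one prompt."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items)

        sections = [
            ("Goals", self.goals),
            ("Constraints", bullets(self.constraints)),
            ("Output template", self.output_template),
            ("Self-evaluation", bullets(self.evaluation)),
            ("Thought partnership", self.thought_partnership),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Hypothetical wording for a product discovery agent.
instructions = AgentInstructions(
    goals="Act as a product discovery partner and draft PRDs from customer signals.",
    constraints=["Do not invent customer data.", "Do not propose solutions outside scope."],
    output_template="Problem / Users / Acceptance criteria / Scope / Open questions",
    evaluation=["Every acceptance criterion is testable.", "Each claim cites a provided signal."],
    thought_partnership="Ask clarifying questions when context is missing, and stay objective.",
)
print(instructions.to_prompt())
```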

From Upstream to Outcomes

The power of F.I.R.E. becomes clear when you trace a single feature through the entire product lifecycle.

Each stage sets up the next; the quality of upstream work directly determines what AI agents can accomplish downstream.

Product architects the problem. The team assembles a Context Pack with customer signals, business objectives, and constraints. AI generates a draft PRD with user problems, acceptance criteria, and scope. Through iteration, the team refines prompts and inputs based on output quality. Human review validates strategic alignment before the PRD flows downstream.

Design builds on that foundation. With the PRD as dynamic context and the design system as static context, AI-assisted tools generate mockups and annotated specs. The annotations are instructions that translate intent into implementable guidance. Designers review outputs, ensure they match PRD intent, and refine the workflow based on what works.

Engineering harvests the upstream investment. Here’s where the compounding effect becomes tangible. With well-structured PRDs, annotated design specs, documented design systems, and established coding standards, a technical spec agent can build the implementation spec for coding agents. A coding agent can then generate new capabilities (A/B tests, isolated functionality) with remarkable fidelity while engineers drive system design and architecture.

In my experimentation, this approach can deliver 60-80% of an implementation, complete with unit tests and documentation. This is particularly effective for POCs and A/B testing, accelerating delivery and feedback while freeing engineers to focus on the long-term decisions that matter most: system and architecture design, and the trade-off calls that require human expertise.

The moonshot emerges naturally. AI generates PRDs, mockups, and code. Humans architect the system, set strategic direction, and validate output quality. Feedback loops continuously improve context packs, prompts, and processes. Disciplines at each stage compound into a scalable workflow: humans as architects, AI as engine.
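The stage-by-stage flow above, with human review before each artifact moves downstream, can be sketched as a gated pipeline. This is a simplified illustration: `run_with_gates` is a hypothetical helper, the stages are stubs rather than real agents, and the `approve` callback stands in for a human decision.

```python
from typing import Callable

Stage = Callable[[str], str]           # takes the upstream artifact, returns a new one
Reviewer = Callable[[str, str], bool]  # (stage name, artifact) -> approved?


def run_with_gates(
    stages: list[tuple[str, Stage]], seed: str, approve: Reviewer
) -> tuple[str, list[str]]:
    """Run stages in order and stop at the first artifact the reviewer rejects.

    Returns the last approved artifact and the names of the stages that passed.
    """
    artifact = seed
    passed: list[str] = []
    for name, stage in stages:
        candidate = stage(artifact)
        if not approve(name, candidate):
            break  # nothing flows downstream without approval
        artifact = candidate
        passed.append(name)
    return artifact, passed


# Stub stages; a real setup would call the agents here.
stages: list[tuple[str, Stage]] = [
    ("prd", lambda upstream: f"PRD({upstream})"),
    ("design", lambda upstream: f"Design({upstream})"),
    ("code", lambda upstream: f"Code({upstream})"),
]

# Stand-in for a human reviewer who sends the design back for rework.
artifact, passed = run_with_gates(
    stages, "problem statement", approve=lambda name, _artifact: name != "design"
)
print(artifact, passed)
```

Because a rejected artifact never replaces the last approved one, the coding stage cannot start from a design nobody signed off on.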

Start a F.I.R.E. in 30 Days

Week 1 — Foundations: Pick one workflow per function (e.g., Product, Design, Engineering), such as PRD generation or code generation from a mockup. Define your Context Pack structure: identify the static context (standards, systems, objectives) and the dynamic context (signals, research, upstream artifacts).

Week 2 — Iteration: Build your first prompt bundle. A prompt without defined inputs and a clear output template is half-baked. Document the prompt, context requirements, output format, and evaluation criteria together.

Week 3 — Review: Run a real problem through the loop with explicit review gates. Humans validate strategic fit and output quality at each stage before artifacts flow downstream.

Week 4 — Embed: Hold a retro specifically on AI’s role: where it added value, where it slowed you down, and what to change. Document what worked and seed your playbook.
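The Week 2 prompt bundle can be checked for completeness before anyone relies on it. The sketch below assumes a bundle has the four parts named in that step; `PromptBundle` and `missing_parts` are hypothetical names, and the sample bundle is invented.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptBundle:
    prompt: str
    context_requirements: list[str]
    output_format: str
    evaluation_criteria: list[str]


def missing_parts(bundle: PromptBundle) -> list[str]:
    """Return the names of bundle parts that are still empty."""
    return [f.name for f in fields(bundle) if not getattr(bundle, f.name)]


# A half-baked bundle: the prompt and inputs exist, but nothing defines the output.
draft = PromptBundle(
    prompt="Draft a PRD from the customer signals provided.",
    context_requirements=["business_objectives", "customer_signals"],
    output_format="",
    evaluation_criteria=[],
)
print(missing_parts(draft))
```

An empty result means the prompt, its inputs, its output format, and its evaluation criteria are all documented together.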

The System Is the Strategy

The highest-leverage opportunity isn’t AI in any single function. It’s AI embedded across organizational systems, with foundations that make outputs reliable and repeatable. Within those systems, humans are architects: designing workflows, setting direction, and deciding which problems matter.

The gains you achieve downstream are a direct result of the context you assemble upstream. The organizations that capture AI’s full potential won’t be those that adopt it fastest. They’ll be those that build the systems to make it work.